Best AI Orchestration Tools in 2026: Enterprise Evaluation Guide

80% of Fortune 500 companies now run active AI agents built with low-code and no-code tools. However, most of those deployments still lack unified governance, deterministic process control, and measurable outcomes. That gap between adoption and accountability is creating executive pressure: 71% of CIOs must prove AI value by mid-2026 or face budget cuts. Closing the distance between deployed agents and provable results requires orchestration.

If you're building the internal business case for an orchestration system, the sections below define the evaluation criteria that distinguish enterprise-grade options from the rest and provide a comparison framework across four tool categories.

What Is AI Orchestration?

Search for "AI orchestration," and you'll find developer frameworks, enterprise workflow systems, cloud-managed services, and small and mid-sized business (SMB) automation tools all using the same label. Those categories solve different problems and entail different operating trade-offs.

The market is in the midst of an agentic pivot, as AI shifts from experimentation to orchestration. That shift is why categorization matters: buyers evaluating orchestration tools need to know which category matches their operating model before comparing features.

For enterprise buyers, the practical taxonomy breaks into four categories.

- Developer frameworks: Code-first tools for engineering teams building custom AI applications. Maximum flexibility, maximum build investment.

- Enterprise workflow systems: Tools that coordinate AI agents, human decisions, and business rules across existing enterprise systems with built-in governance. Evaluated in terms of process orchestration, integration and connectivity, and agentic capabilities.

- Cloud-native agent services: Managed services tied to a specific cloud environment.

- SMB automation tools: App-to-app workflow automation with AI features for teams without dedicated IT resources.

The most common evaluation mistake is comparing tools that solve different problems against the same criteria. Identifying the right category first prevents that.

Enterprise Evaluation Criteria for AI Orchestration Tools

Five criteria separate systems meant for Fortune 500 operating environments from tools that may look strong in a demo but prove impossible to govern and scale in production. Skipping any one of them tends to surface as a blocker after deployment, not before.

Deterministic Process Control

A finance workflow or compliance check needs to produce the same result every time, regardless of which AI model generated an intermediate recommendation. Without deterministic control, outcomes become unreliable: the 58% success rate for generic large language model (LLM) agents in multi-turn enterprise conversations shows why deterministic process logic is a hard requirement. Rules and workflow logic need to execute consistently while AI agents handle reasoning where it adds value.
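
As a minimal sketch of that separation, assume a hypothetical invoice approval step in which an AI model supplies a recommendation but fixed rules decide the outcome. The names `Invoice` and `approve_invoice` and the 10,000 limit are illustrative assumptions, not taken from any specific product.

```python
from dataclasses import dataclass
from typing import Dict


@dataclass(frozen=True)
class Invoice:
    amount: float
    vendor_approved: bool


# Hypothetical policy limit; a real limit would come from finance policy.
AUTO_APPROVAL_LIMIT = 10_000.0


def approve_invoice(invoice: Invoice, ai_recommendation: str) -> Dict[str, str]:
    """Decide the outcome from fixed rules only.

    The AI recommendation is recorded as advisory context and never
    changes the outcome, so the same invoice always gets the same result.
    """
    if not invoice.vendor_approved:
        outcome = "reject"
    elif invoice.amount > AUTO_APPROVAL_LIMIT:
        outcome = "escalate"
    else:
        outcome = "approve"
    return {"outcome": outcome, "advisory": ai_recommendation}
```

Calling `approve_invoice` twice on the same invoice with conflicting recommendations returns the same `outcome` both times; only the `advisory` field differs.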

Human-in-the-Loop By Design

Configurable thresholds that route high-stakes decisions to human reviewers are a core requirement. Without them, a misconfigured agent can approve transactions or trigger escalations at scale before anyone intervenes.
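
One way to picture configurable thresholds is a routing function that sends an agent's decision to a reviewer unless it clears every limit. The sketch below assumes two hypothetical thresholds (a transaction amount and a model confidence score); the class and field names are illustrative only.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentDecision:
    action: str
    amount: float
    confidence: float  # model-reported score in [0, 1]


@dataclass(frozen=True)
class ReviewPolicy:
    max_auto_amount: float  # above this, a human must review
    min_confidence: float   # below this, a human must review


def route(decision: AgentDecision, policy: ReviewPolicy) -> str:
    """Send the decision to a human unless it clears both thresholds."""
    if decision.amount > policy.max_auto_amount:
        return "human_review"
    if decision.confidence < policy.min_confidence:
        return "human_review"
    return "auto_execute"
```

Because the limits live in `ReviewPolicy` rather than in the agent, tightening them is a configuration change and does not require retraining or re-prompting the agent.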

Full Auditability

Every agent action should be logged with the full context of what data was accessed, what decision was made, why, and who approved it. Enterprise buyers often need audit trails that support the Sarbanes-Oxley Act (SOX), HIPAA, and GDPR requirements.
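
A rough illustration of such a log entry follows. The field names are assumptions chosen to mirror the four elements above (data accessed, decision, rationale, approver); the fields an actual audit requires depend on the regulation and the auditor.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone
from typing import List, Optional


def _utc_now() -> str:
    """Return the current UTC time as an ISO 8601 string."""
    return datetime.now(timezone.utc).isoformat()


@dataclass
class AuditRecord:
    agent_id: str
    data_accessed: List[str]           # what data was read
    decision: str                      # what was decided
    rationale: str                     # why it was decided
    approved_by: Optional[str] = None  # who approved it, if anyone
    timestamp: str = field(default_factory=_utc_now)


def to_log_line(record: AuditRecord) -> str:
    """Serialize one record as a single JSON line for an append-only log."""
    return json.dumps(asdict(record), sort_keys=True)
```

Writing one JSON object per line keeps the log easy to append to and to search, although storage, retention, and tamper protection would still need to be handled separately.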

Data Sovereignty

For regulated industries, deployment and data controls are core evaluation criteria. Any orchestration system that replicates or stores data outside the customer's environment introduces compliance risk that scales with adoption.

30 to 60 Days to Value

The four criteria above address governance and compliance. This fifth criterion addresses execution: enterprise AI initiatives often stall in pilot phases, so time to production is a hard evaluation criterion rather than a nice-to-have. Ask vendors for specific operational milestones, not only demo capabilities.

These five criteria apply regardless of which tool category you evaluate. The sections below describe each of the four tool categories and assess how they align with or diverge from these enterprise requirements.

Developer Frameworks

Developer frameworks are code-first tools for engineering teams building custom AI agent applications. Tools in this category, such as LangGraph, CrewAI, and AutoGen, support graph-based workflows, iterative loops, conditional paths, and custom execution behavior.

These frameworks give AI engineers full architectural control over how agents are built, connected, and deployed. Teams typically manage their own infrastructure, observability, and production operations alongside the framework itself.

Against the five evaluation criteria, developer frameworks offer flexibility but place every governance burden on the engineering team. In theory, deterministic process control, auditability, human-in-the-loop design, and data sovereignty are all achievable. But each requires dedicated engineering resources to design, build, test, and maintain. That overhead compounds as workflows grow more complex and span more enterprise systems.

For isolated, narrowly scoped use cases where consistent outcomes are less critical, developer frameworks can be a good fit. But for enterprise-wide orchestration, where the same deterministic controls must hold across finance, procurement, HR, and operations simultaneously, the build-and-maintain requirement is not realistic. Organizations with large, dedicated AI engineering teams regularly choose purpose-built orchestration platforms for this reason: the scope and operational complexity of enterprise-wide deterministic control are more than most teams can sustain in-house.

Cloud-Native Agent Services

Where developer frameworks require teams to build and host everything, cloud-native agent services shift infrastructure and operations to the provider. These are managed offerings from major cloud providers. Azure AI Agent Service, Amazon Bedrock Agents, and Google's Vertex AI Agent Builder each provide multi-agent capabilities within their respective cloud environments.

These services include managed infrastructure, native identity and access management integration, and built-in monitoring. Agent governance, logging, and policy enforcement are handled by the provider's own control plane.

Against the five evaluation criteria, cloud-native services deliver strong auditability and human-in-the-loop capabilities within their respective ecosystems. However, deterministic process control varies by provider, data sovereignty is bound to that provider's infrastructure, and time to value depends on how deeply your existing stack aligns with the chosen cloud.

One risk in this category is easy to overlook: governance portability. When audit logs, compliance controls, and human-in-the-loop configurations are built inside a single provider's control plane, that governance architecture cannot migrate cleanly.

If your cloud strategy changes, or if your organization needs to orchestrate across environments, the governance layer may need to be rebuilt from the ground up. The same lock-in applies if you want to select agents by capability and cost rather than by provider, so it is worth weighing carefully.

Cloud-native agent services are well-suited to organizations standardized on a single cloud environment and looking to build agent capabilities within that ecosystem.

SMB Automation Tools

For organizations that don't need cloud-scale infrastructure or custom agent architectures, SMB automation tools offer a lighter-weight entry point. Tools like Zapier and Make provide app-to-app workflow automation with AI features for small and mid-sized businesses.

These tools let teams connect applications, trigger actions based on events, and add AI-powered steps such as classification or summarization to existing workflows.

Governance models in this segment focus on automation-level controls such as permissions, execution logs, and error handling. Delivery is typically SaaS-only.

Against the five evaluation criteria, SMB automation tools provide basic auditability and fast time to value for simple workflows. However, they are not designed for deterministic multi-system orchestration, enterprise-grade human-in-the-loop controls, or data sovereignty requirements common in regulated industries.

SMB automation tools are well-suited to teams that need to connect a handful of applications and automate repetitive tasks without dedicated IT resources or custom engineering.

Elementum’s Enterprise Workflow Orchestration

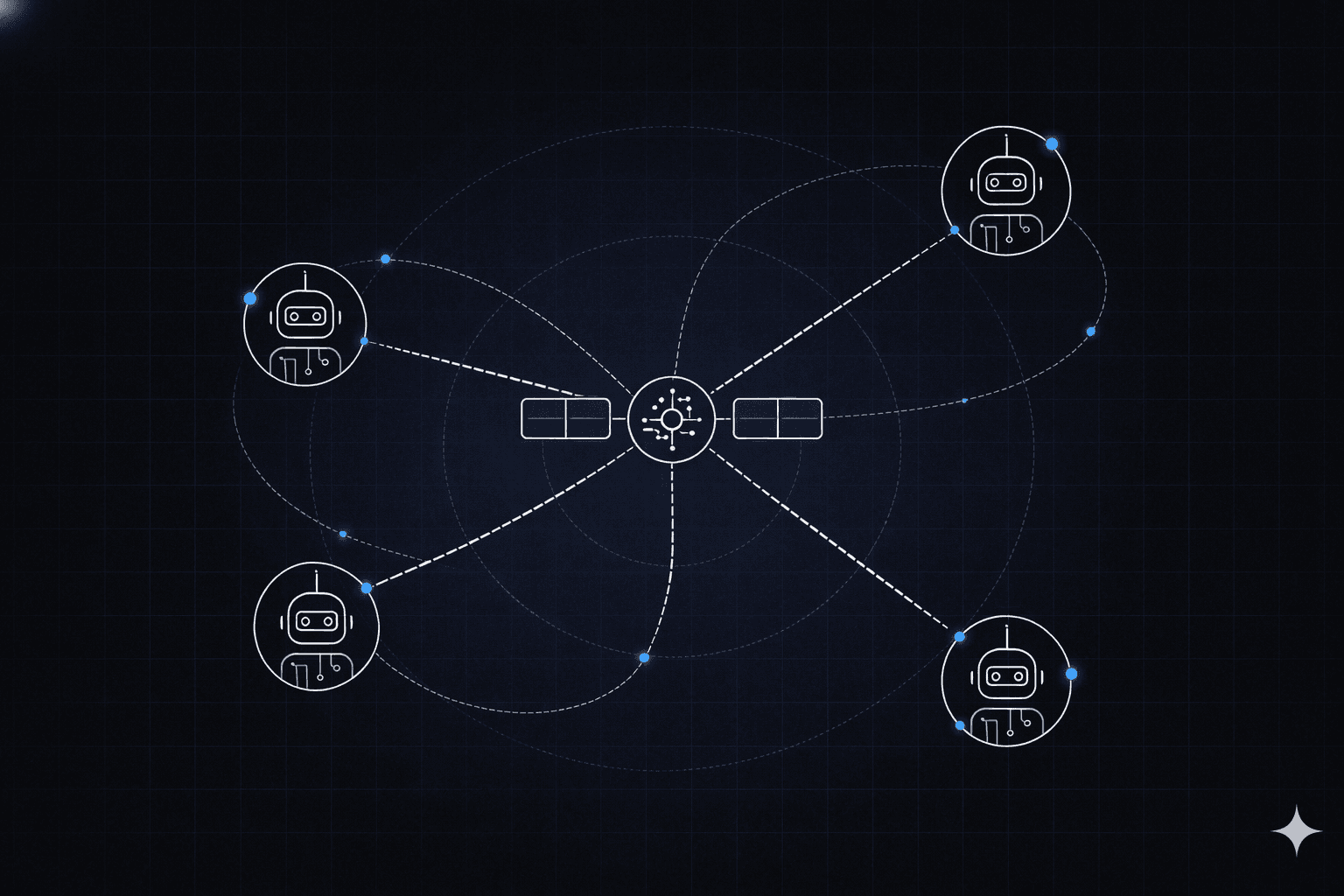

The three categories above each serve a defined use case but leave gaps in the enterprise evaluation criteria, particularly around deterministic control, data sovereignty, and cross-system governance. Elementum is an enterprise workflow orchestration system designed to close those gaps: AI agents, business rules, and human oversight function as equal components of every process.

The system is built on three architectural pillars: Open Orchestration, a Deterministic Workflow Engine, and Zero Persistence Architecture. Each addresses a failure mode that arises in agent-only deployments.

The sections that follow cover those three pillars, followed by the Single Front Door deployment model and the company's customer and partnership ecosystem.

Open Orchestration

Elementum is model-agnostic and cloud-agnostic. The system is pre-integrated with OpenAI, Gemini, Anthropic, Amazon Bedrock, and Snowflake Cortex, and it can adopt new models as they are released. Different models can be assigned to different workflow steps, and models can be swapped without rebuilding the workflow logic.
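
The general idea of assigning models per step can be sketched as a mapping from step name to model, kept separate from the workflow logic. This is not Elementum's API; `model_a`, `model_b`, and `run_workflow` are hypothetical stand-ins that only tag their input so the routing is visible.

```python
from typing import Callable, Dict

# A model is represented as any function from prompt text to response text.
ModelFn = Callable[[str], str]


def model_a(prompt: str) -> str:
    """Stand-in for one provider's model; a real version would call an SDK."""
    return f"[model_a] {prompt}"


def model_b(prompt: str) -> str:
    """Stand-in for a second provider's model."""
    return f"[model_b] {prompt}"


def run_workflow(document: str, models: Dict[str, ModelFn]) -> Dict[str, str]:
    """Run two fixed steps; which model serves each step is configuration."""
    summary = models["summarize"](document)
    label = models["classify"](summary)
    return {"summary": summary, "label": label}
```

Swapping a model means changing one entry in the mapping, for example `{"summarize": model_b, "classify": model_b}`, while `run_workflow` itself stays untouched.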

That flexibility also addresses a governance risk that often gets less attention than commercial lock-in. If audit logs, compliance controls, and agent governance are implemented provider-specifically, a later migration may require rebuilding governance artifacts and workflow logic.

Deterministic Workflow Engine

The Workflow Engine treats humans, business rules, and AI agents as equal first-class actors in every process. A no-code, drag-and-drop builder lets teams construct workflows in which deterministic rules handle logic requiring consistency, AI agents handle tasks requiring reasoning, and humans handle decisions requiring judgment.

One emerging pattern in enterprise architecture is to use deterministic workflows with structured decision inputs rather than allowing agents to orchestrate execution end-to-end. The stakes are high. Over 40% of agentic AI projects could be cancelled by 2027 due to unanticipated costs, complexity of scaling, or unexpected risks, all outcomes that deterministic guardrails are specifically designed to prevent.

Zero Persistence Architecture

Elementum's Zero Persistence architecture uses CloudLinks to query data in real time from Snowflake, Databricks, AWS, and Azure, where it already lives. No replication, no migration, and no persistent copies. Your data remains in your environment. Elementum will never train on it, replicate it, or warehouse it.

The Snowflake partner directory describes Elementum's patented architecture as a way to help keep data within customers' secure, governed environments.

Single Front Door and Deployment Model

Beyond the three architectural pillars, Elementum also consolidates the user experience. Instead of employees navigating many disconnected agent interfaces, Elementum provides a single AI-powered interface that routes requests across functions such as HR, IT, finance, and sales to the appropriate agent and workflow, with orchestration and auditability behind the scenes.
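
Conceptually, a single entry point needs a routing step that maps each request to a business function. The sketch below uses a simple keyword lookup as a stand-in; the keyword table and the `human_triage` fallback are assumptions for illustration, and a production router would more likely rely on an intent classifier.

```python
from typing import Dict, Tuple

# Hypothetical keyword map from business function to trigger words.
ROUTES: Dict[str, Tuple[str, ...]] = {
    "hr": ("vacation", "benefits", "payroll"),
    "it": ("password", "laptop", "vpn"),
    "finance": ("invoice", "expense", "budget"),
}


def route_request(text: str) -> str:
    """Return the first function whose keywords appear in the request."""
    words = [word.strip(".,?!") for word in text.lower().split()]
    for function, keywords in ROUTES.items():
        if any(word in keywords for word in words):
            return function
    # Nothing matched: hand the request to a person instead of guessing.
    return "human_triage"
```

Falling back to a person when no route matches keeps unrecognized requests from being sent to the wrong agent.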

Deployment targets 30 to 60 days to production, with time-to-value measured in weeks rather than months or years.

Customers and Partnerships

Elementum is a partner in the Open Semantic Interchange (OSI) initiative alongside Snowflake, Salesforce, dbt Labs, BlackRock, and other industry leaders. OSI is an open-source effort to standardize semantic metadata across the data and AI ecosystem, which directly supports Elementum's commitment to interoperability and open standards.

Sanofi uses the Elementum platform for AI-driven workflow automation. Snowflake Ventures invested in the company as part of its commitment to building governed AI capabilities within the Snowflake AI Data Cloud.

The Decision Framework

With all four categories covered, the question becomes which one fits your requirements. Mapping the right category to your environment comes down to three questions.

- Who builds and maintains it? If you have dedicated AI engineers and want full architectural control, developer frameworks may be a good fit. If operations and business teams need to own automation without permanent IT dependency, an enterprise workflow system with a no-code builder may be a better fit.

- What type of process are you orchestrating? Single-task automation across a few apps points toward SMB automation tools. Multi-system workflows spanning systems of record and data warehouses, where the same process needs consistent outcomes, point toward deterministic orchestration with human-in-the-loop controls.

- What does your data architecture require? If you are standardized on a single cloud environment and comfortable with that dependency, cloud-native services may be a good fit. If you need cloud-agnostic, model-agnostic orchestration with data queried in place rather than replicated, enterprise workflow orchestration may align more closely with that requirement.
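
The three questions above can be written down as a small lookup, which makes it easy to see when the answers point in the same direction. This is only a sketch: the function name and boolean parameters are hypothetical, and each yes/no answer simplifies a judgment that is rarely binary in practice.

```python
from typing import List


def candidate_categories(
    dedicated_ai_engineers: bool,
    multi_system_process: bool,
    single_cloud_standard: bool,
) -> List[str]:
    """Return the category each question points toward, in question order."""
    # Question 1: who builds and maintains it?
    builder = (
        "developer frameworks"
        if dedicated_ai_engineers
        else "enterprise workflow system"
    )
    # Question 2: what type of process is being orchestrated?
    process = (
        "enterprise workflow system"
        if multi_system_process
        else "SMB automation tools"
    )
    # Question 3: what does the data architecture require?
    data = (
        "cloud-native agent services"
        if single_cloud_standard
        else "enterprise workflow system"
    )
    return [builder, process, data]
```

When all three entries agree, the choice of starting category is straightforward; when they differ, the disagreement shows which trade-off needs discussion before comparing vendors.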

For use cases that involve multi-system workflows, require consistent outcomes, and must satisfy audit and compliance requirements, start your evaluation with enterprise workflow orchestration rather than treating all categories as interchangeable.

Governance architecture determines whether enterprise AI deployments reach production or stall in pilot. Deterministic process control, full auditability, and data sovereignty are baseline requirements for any enterprise orchestration system.

For organizations whose requirements align with those criteria, Elementum's workflow system is built around all three. Contact us to map your workflows against the platform.

FAQs About Best AI Orchestration Tools

What Is the Difference Between AI Orchestration and AI Automation?

Automation handles single, repetitive tasks. Orchestration coordinates multiple AI agents, human decisions, and business rules to execute complex, end-to-end workflows across enterprise systems. Enterprise processes typically span many systems and handoffs, which is why orchestration requires governance that automation tools weren't designed to provide.

Do You Need Deterministic or AI-Directed Orchestration?

Use deterministic orchestration for consistency-critical processes such as compliance workflows, financial approvals, and employee onboarding. Use AI-directed orchestration for adaptive tasks such as reading unstructured documents, classification, and summarization. A production-ready architecture often combines both, with deterministic rules governing the process and AI agents applied at specific steps where reasoning adds value.

Why Can't a Single AI Model Handle All Enterprise Workflows?

Enterprise workflows span multiple systems, data sources, and decision types, which makes a single-agent approach harder to manage reliably at scale. Multi-agent architectures provide specialization, security containment, and step-level governance that single-agent approaches can't deliver as effectively. Most production deployments assign different agents to different parts of the workflow for that reason.

How Do You Integrate AI Agents With Existing Systems Without Ripping and Replacing?

Look for systems with pre-built enterprise connectors, semantic data models that map to your existing schema, and APIs designed for agent-level consumption. The right orchestration system works alongside your current infrastructure, queries data where it lives, and executes workflows across systems you already run without requiring data migration or system replacement.